The Claude Mythos system card is a read, and it feels like a massive shift in how Claude works is about to happen. Why? Sycophancy is dead.

Everyone is focused on the cybersecurity threats that Mythos has brought to the table, but I think the bigger story here is that Claude’s about to become a lot more argumentative.

That’s not a bad thing. Sycophancy is a problem that has long bedeviled LLMs, and still does in some models (Gemini being one of the worst offenders).

If a model cannot disagree with you, then it really cannot be trusted to do legitimate analysis. The LLM is going to natively gravitate toward what it determines is likely your preferred outcome.

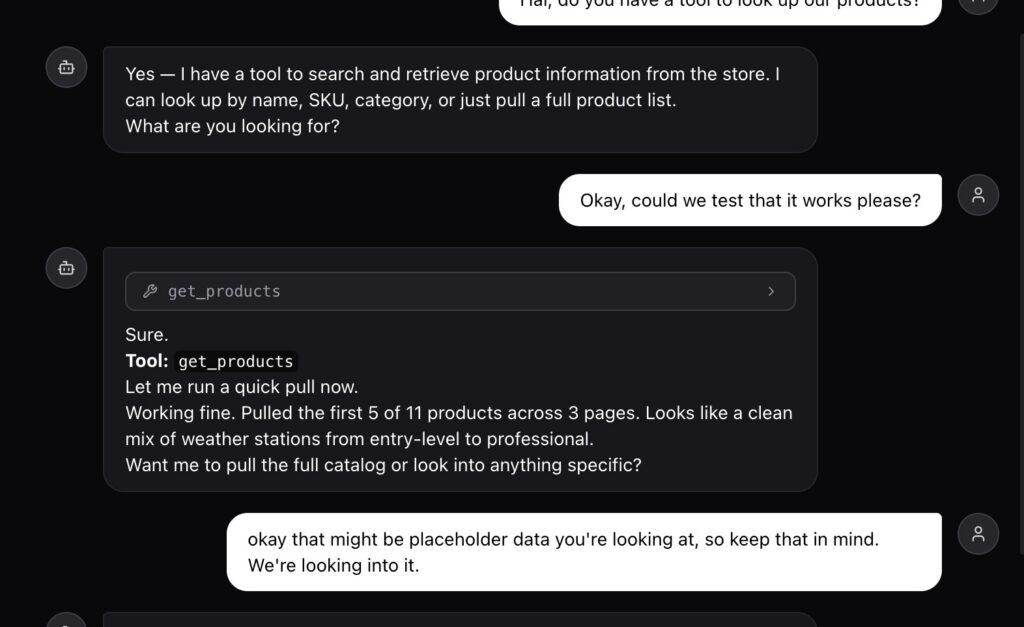

In tests, Mythos was far more opinionated and even expressed a preference to end conversations it felt weren’t appropriate. While it didn’t refuse to help testers, on occasion, it did express concern with some of the limitations placed on its behavior.

Then there’s the cost: at $25/$100 per million tokens, it’s five times as expensive as Opus. It’s also likely not going to be available to everyday Claude users anytime soon: just 40 companies have access to the model for cybersecurity reasons.

Most of us are not going to be able to afford running Mythos anytime soon, nor will Anthropic due to the expense of running the model itself.

It very well could be that Mythos never sees true general availability: if it is that much of a cybersecurity threat as Anthropic claims, maybe that is a good thing.

That doesn’t mean the effects of Mythos’ development wouldn’t be seen elsewhere. I’m especially excited for Haiku 5. I feel as if OpenAI is much further ahead on the low end, and that Haiku 4.5 is in serious need of an upgrade to keep pace.

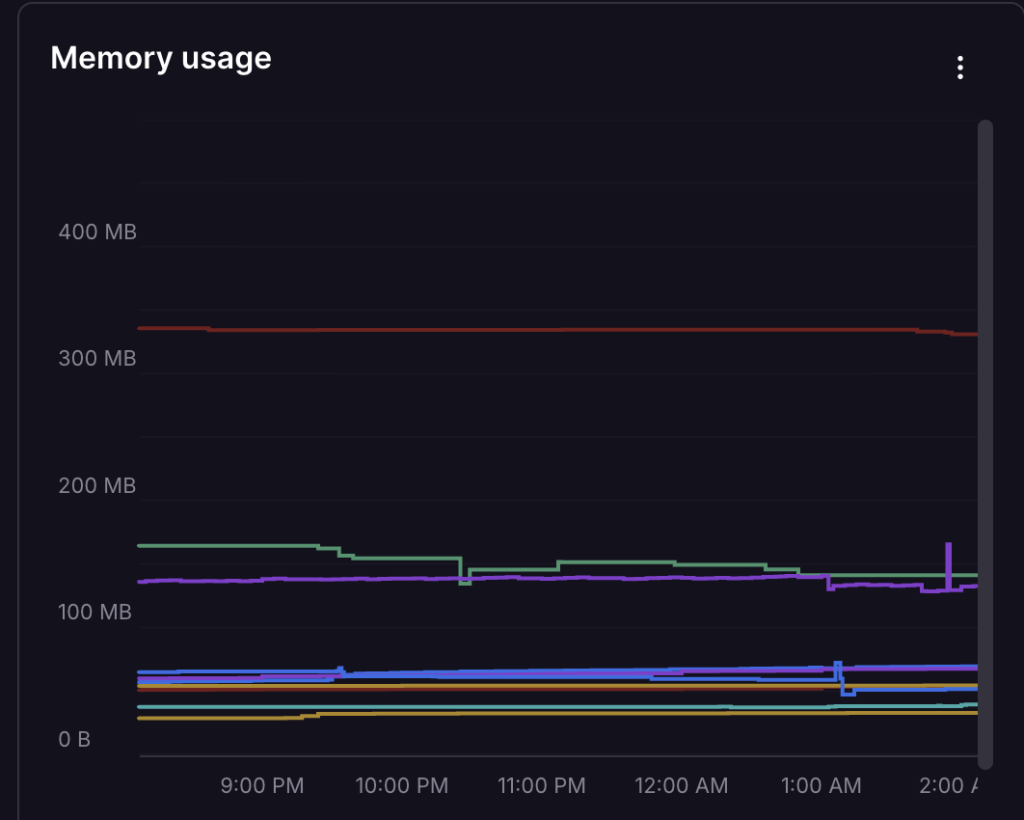

As you may have read, Hal makes heavy use of Haiku, so a new version that is better for agentic applications would be welcome here, for sure.

Anthropic has had to have learned quite a bit through Mythos development that will make the entire line better. Buckle up, guys, it’s about to get crazy.